Introduction

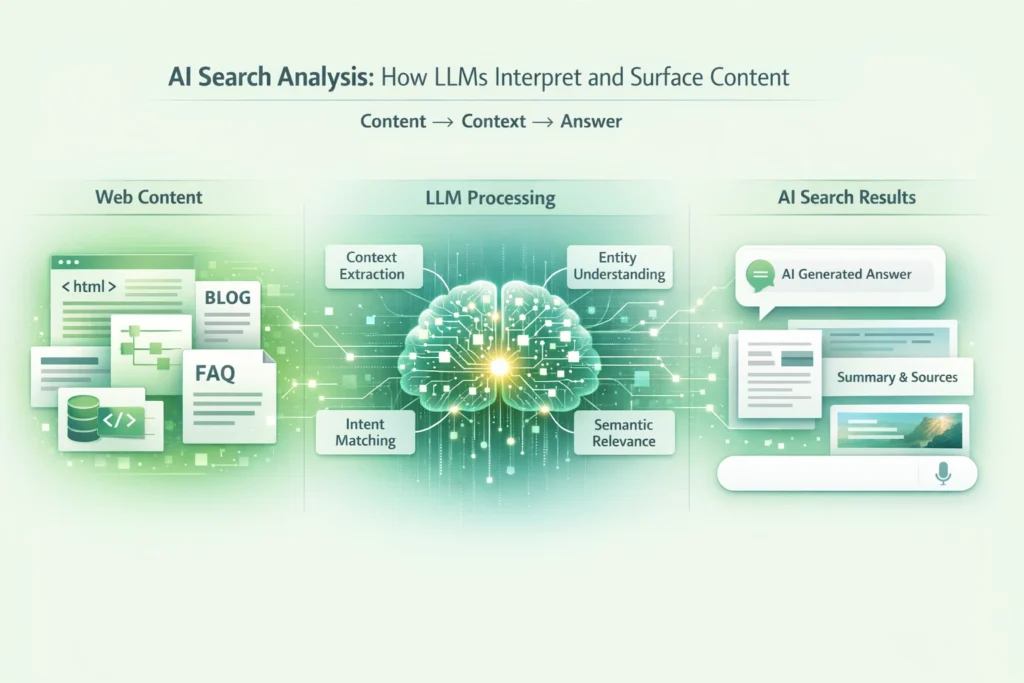

AI search systems do not retrieve content the way traditional search engines do. They interpret, abstract, and recombine information before deciding what to surface. For SEO leaders, this shift introduces a new challenge: visibility is no longer determined solely by ranking mechanics, but by how well content can be understood, trusted, and reused by large language models.

AI search analysis is therefore not an extension of keyword research or SERP tracking. It is an examination of how LLMs ingest content, identify authority, and decide which sources are safe to synthesize into answers. Organizations that fail to understand this layer risk becoming invisible even when rankings appear stable.

To operate effectively in AI-influenced search environments, SEO teams must understand how interpretation happens before surfacing ever begins.

How LLM-Based Search Differs From Traditional Search

Traditional search engines evaluate documents primarily for retrieval. AI-driven search systems evaluate them for comprehension.

This distinction changes the role of content entirely.

Retrieval Versus Interpretation

Classic search ranks pages to present choices. LLM-based search attempts to resolve intent directly. Content is parsed, summarized, and abstracted before any decision about citation or attribution is made.

Pages Are Inputs, Not Destinations

In AI search, a page is often not the endpoint. It is a data source contributing to an answer. This means partial inclusion is common, and full-page visits are optional.

Authority Is Evaluated Before Relevance

If a source is not deemed trustworthy or internally consistent, relevance alone is insufficient. AI systems are risk-averse by design.

How LLMs Ingest Content

Understanding ingestion is critical for designing content that survives AI interpretation.

Semantic Chunking

LLMs break content into conceptual units rather than reading pages linearly. Headings, paragraph structure, and logical sequencing determine how meaning is segmented.
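To make the idea of segmentation concrete, here is a minimal sketch of heading-based chunking. It is an illustration only, not how any particular AI search system works: it assumes the page is available as Markdown with `#`-style headings, and the `Chunk` class and `chunk_by_headings` function are hypothetical names introduced for this example.

```python
import re
from dataclasses import dataclass
from typing import List


@dataclass
class Chunk:
    heading: str  # heading text that introduces the chunk
    text: str     # body text found under that heading


def chunk_by_headings(markdown_text: str) -> List[Chunk]:
    """Split a Markdown document into one chunk per heading.

    Text that appears before the first heading is labeled "(preamble)".
    Headings with no body text are skipped.
    """
    chunks: List[Chunk] = []
    heading = "(preamble)"
    lines: List[str] = []

    def flush() -> None:
        # Store the accumulated lines as a chunk if they contain any text.
        body = "\n".join(lines).strip()
        if body:
            chunks.append(Chunk(heading, body))

    for line in markdown_text.splitlines():
        match = re.match(r"^#{1,6}\s+(.*)$", line)
        if match:
            flush()
            heading = match.group(1).strip()
            lines = []
        else:
            lines.append(line)
    flush()
    return chunks
```

Running this over a draft shows which headings end up owning which text. A chunk whose body does not make sense without the sections around it is a candidate for rewriting.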

Contextual Weighting

Not all sections of a page are treated equally. Definitions, explanations, and constraints carry more weight than introductions or promotional language.

Cross-Document Comparison

AI systems evaluate consistency across multiple sources. Content that contradicts other authoritative material without justification is less likely to be surfaced.

Why Some Content Is Invisible to AI Search

Many sites produce content that performs adequately in traditional search but fails to appear in AI-generated answers.

Common causes include:

- Ambiguous or circular explanations

- Overly marketing-driven language

- Lack of clear conceptual boundaries

- Inconsistent terminology across pages

From an AI perspective, this content increases uncertainty and is therefore excluded.
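Of these causes, inconsistent terminology is the easiest to check mechanically. The sketch below assumes you already have page text in a dictionary and a hand-maintained list of competing labels for one concept. The function name `find_term_variants` and the sample labels are hypothetical.

```python
import re
from typing import Dict, List, Set


def find_term_variants(pages: Dict[str, str], variants: List[str]) -> Dict[str, Set[str]]:
    """Map each variant label to the set of page names that use it.

    Matching is case-insensitive and respects word boundaries.
    Variants that appear on no page are omitted from the result.
    """
    usage: Dict[str, Set[str]] = {}
    for variant in variants:
        pattern = re.compile(r"\b" + re.escape(variant) + r"\b", re.IGNORECASE)
        users = {name for name, text in pages.items() if pattern.search(text)}
        if users:
            usage[variant] = users
    return usage


if __name__ == "__main__":
    # Hypothetical pages and labels, for illustration only.
    pages = {
        "guide": "Our AI search guide covers the basics.",
        "blog": "LLM search explained in plain terms.",
        "faq": "Common questions about ai search.",
    }
    usage = find_term_variants(pages, ["AI search", "LLM search", "generative search"])
    for variant, names in sorted(usage.items()):
        print(variant, "->", sorted(names))
    if len(usage) > 1:
        print("More than one label is in use for the same concept.")
```

If more than one label comes back, the site is describing a single concept under several names, and standardizing on one of them is a reasonable first step.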

Interpretability Is the New Optimization Layer

AI search systems favor content that can be interpreted unambiguously, so that the same passage yields the same reading each time it is processed.

Interpretability depends on:

- Explicit definitions of concepts

- Clear cause-and-effect explanations

- Logical progression without hidden assumptions

This does not require simplification. It requires precision.

How AI Decides What to Surface

Surfacing is a selective process driven by confidence and coverage.

Confidence Thresholds

AI systems are more likely to surface content when multiple sources reinforce the same interpretation. Singular or uncorroborated perspectives are treated cautiously.

Coverage of Intent

Content that addresses only part of an intent may be used as supporting material, but not as a primary source. Comprehensive, bounded explanations are preferred.

Risk Avoidance

For sensitive or complex topics, AI systems avoid sources that overstate certainty or ignore trade-offs. Balanced explanations signal expertise.

Entity Understanding and Topical Coherence

LLMs reason heavily through entities and their relationships.

Content ecosystems that clearly establish:

- Who the organization is

- What domains it operates in

- How concepts relate to one another

are easier for AI systems to trust and reuse.

Fragmented content strategies weaken this understanding, even if individual pages are well-written.

Why Formatting Alone Is Not Enough

There is a misconception that AI visibility can be improved primarily through formatting tricks such as lists, tables, or concise answers.

While structure helps ingestion, it does not compensate for weak substance. AI systems evaluate meaning before presentation.

Well-structured ambiguity is still ambiguity.

AI Search Analysis as an Ongoing Discipline

Analyzing AI search visibility is not a one-time audit.

It requires continuous evaluation of:

- Which content is being referenced or paraphrased

- Where interpretations diverge from the intended meaning

- Which topics lack clear authoritative sources

This analysis informs content refinement, consolidation, and governance decisions.

Aligning Content Teams With AI Interpretation

AI search analysis exposes gaps between how organizations think about their content and how it is actually understood.

High-performing teams:

- Design content around questions AI must answer

- Standardize terminology and definitions

- Reduce internal contradictions across assets

This alignment improves both AI visibility and human comprehension.

Measuring Success Beyond Rankings

Traditional SEO reporting is insufficient for AI search analysis.

More relevant signals include:

- Inclusion in AI-generated responses

- Consistency of interpretation across prompts

- Brand and entity mentions without direct links

These indicators reflect influence rather than navigation.
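Two of these signals can be approximated with simple text statistics. The sketch below assumes you have already collected AI-generated responses as plain strings, by whatever means you use. It does not call any AI service. The names `mention_rate` and `mean_pairwise_jaccard` are hypothetical, and token-overlap (Jaccard) similarity is only a rough proxy for consistency of interpretation.

```python
import re
from itertools import combinations
from typing import List, Set


def mention_rate(responses: List[str], brand: str) -> float:
    """Fraction of responses that mention the brand (case-insensitive, whole word)."""
    if not responses:
        return 0.0
    pattern = re.compile(r"\b" + re.escape(brand) + r"\b", re.IGNORECASE)
    hits = sum(1 for response in responses if pattern.search(response))
    return hits / len(responses)


def _tokens(text: str) -> Set[str]:
    # Lowercased alphanumeric word tokens.
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def mean_pairwise_jaccard(responses: List[str]) -> float:
    """Average Jaccard similarity of token sets over all pairs of responses.

    Returns 1.0 when there are fewer than two responses to compare.
    """
    pairs = list(combinations(responses, 2))
    if not pairs:
        return 1.0
    scores = []
    for first, second in pairs:
        a, b = _tokens(first), _tokens(second)
        union = a | b
        scores.append(len(a & b) / len(union) if union else 1.0)
    return sum(scores) / len(scores)
```

Tracked over time for a fixed set of prompts, a falling mention rate or a falling similarity score indicates where to investigate. Neither number explains why the change occurred.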

Preparing for Continued Evolution

AI search systems are still evolving. Interpretation logic, citation behavior, and surfacing rules will change.

Organizations that focus on clarity, authority, and internal coherence will adapt more easily than those chasing specific behaviors.

Conclusion

AI search analysis is about understanding how meaning is extracted, evaluated, and reused.

LLMs do not reward volume, formatting tricks, or isolated optimization. They surface content that they can confidently interpret and trust.

SEO teams that design content for interpretability rather than retrieval will maintain visibility as search shifts from ranking pages to answering questions.